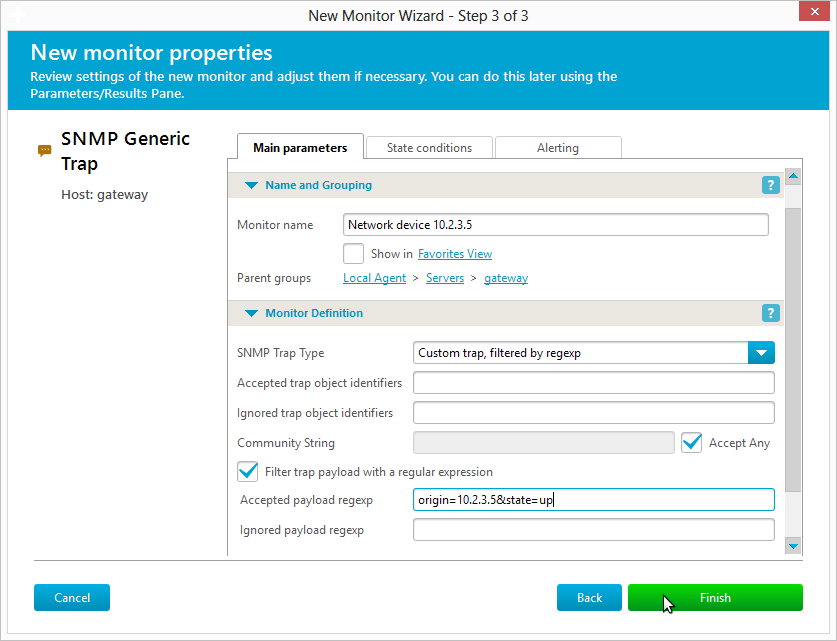

#LOGSTASH CONFIG SNMP TRAP RECEIVER INSTALL#

Check out the latest version of this guide here. The updated article utilizes the latest version of the ELK stack on CentOS 7.

What is ELK?

ELK is a powerful set of tools used for log correlation and real-time analytics. This post will discuss the benefits of using it and serve as a guide to getting it up and running in your environment. ELK is an acronym that stands for Elasticsearch, Logstash, Kibana. In recent months I have been seeing a lot of interest in ELK for systems operations monitoring as well as application monitoring. It was really impressive, and I thought of how useful it could be for network operations.

Many environments just have the basics covered (up/down alerting and performance monitoring). Some companies go one step further and log syslog to a central server. For a long time this has been acceptable, but things must change. While this guide is solely meant to show how network data can be captured and used, the real goal is to have all infrastructure and applications log to ELK as well.

Below are some screenshots showing real-time dashboards that would be useful in a NOC environment. With ELK, building a dashboard this amazing takes less than half an hour. It's dynamic, so you can build a dashboard that fits your use case. In the examples below, our NOC was able to see issues before anyone even picked up the phone to report them.

VOIP provider accidentally routed all voice traffic into our network

Focusing just on network operations, ELK is great for capturing and parsing syslogs and SNMP traps and making them searchable. ELK is not really meant for up/down alerting or performance metrics like interface utilization. There are some things you can do in that arena, but that is beyond the scope of this post.

To understand how a syslog message goes from text to useful data, you must understand which components of ELK perform which roles. First, the syslog server collects the raw, textual logs. Second, Logstash filters and parses the logs into structured data. Third, Elasticsearch indexes and stores the structured data for near-instant search. Fourth, Kibana is the means to interact with and search through the data stored in Elasticsearch.

For the sake of simplicity, all roles will be installed on a single server. If you need additional performance or need to scale out, then the roles should be separated onto different servers.

- Elasticsearch - Index and store the data.
- Kibana - Interact with the data (via web interface).

You can actually collect syslogs directly with Logstash, but many places already have a central syslog server running and are comfortable with how it operates. For that reason I will use a standard syslog server for this post.

Certain compliance standards, like PCI-DSS, require that you keep logs for a certain period of time. Native syslog logs take up less storage than logs processed with Logstash and Elasticsearch. Because of this, I chose to store them in gzipped text files for 90 days, and only have a few weeks indexed and searchable with ELK. In the event of an audit or a security incident, you could search the old data in the raw syslog files or pull the old data into ELK. If you have more disk space to throw at Elasticsearch, then you could keep much more than a few weeks. You are only limited by the amount of storage available.

Setting Up syslog-ng:

For a central syslog server I chose CentOS 6.5 running syslog-ng. CentOS ships with rsyslog, but I think the syslog-ng configuration is much easier to understand and configure. On a default installation of CentOS 6.5, first we need to install Extra Packages for Enterprise Linux (EPEL).

Stop and disable rsyslog and install syslog-ng: `sudo yum install syslog-ng-libdbi syslog-ng -y`
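To make the "text to structured data" step described above more concrete, here is a minimal Python sketch of the kind of parsing Logstash performs on a raw syslog line. It is not Logstash's own implementation. The regular expression, the function name `parse_syslog_line`, and the sample message are all assumptions made up for illustration, and real devices often deviate from this BSD-style layout.

```python
import re

# Assumed pattern for a BSD-style syslog line: <PRI>Mmm dd hh:mm:ss host message
# Real network devices vary, so treat this as illustrative only.
SYSLOG_RE = re.compile(
    r"^<(?P<pri>\d{1,3})>"
    r"(?P<timestamp>[A-Z][a-z]{2}\s+\d{1,2}\s\d{2}:\d{2}:\d{2})\s"
    r"(?P<host>\S+)\s"
    r"(?P<message>.*)$"
)


def parse_syslog_line(line):
    """Return a dict of fields for a matching line, or None if it does not match."""
    match = SYSLOG_RE.match(line)
    if match is None:
        return None
    fields = match.groupdict()
    # The PRI value encodes facility and severity as facility * 8 + severity.
    pri = int(fields.pop("pri"))
    fields["facility"] = pri // 8
    fields["severity"] = pri % 8
    return fields


# Made-up sample line, not taken from a real device.
sample = "<189>Oct  3 14:22:01 core-sw1 %LINK-3-UPDOWN: Interface Gi0/1, changed state to down"
parsed = parse_syslog_line(sample)
print(parsed)
```

Once a line is split into fields like `host` and `severity`, a search engine can index each field separately, which is what makes the dashboards and searches possible.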
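The two-tier retention idea from the post (raw gzipped syslog kept for 90 days, only a few weeks indexed in ELK) can be sketched as a small Python decision function. The 90-day figure comes from the post. The 21-day indexed window is an assumption standing in for "a few weeks", and the function name and state labels are hypothetical.

```python
from datetime import date, timedelta

RAW_RETENTION_DAYS = 90      # from the post: gzipped syslog files kept for 90 days
INDEXED_RETENTION_DAYS = 21  # assumption: "a few weeks" taken as three weeks


def retention_state(log_date, today):
    """Classify one day's logs by age under the two-tier retention scheme."""
    age_days = (today - log_date).days
    if age_days > RAW_RETENTION_DAYS:
        return "expired"        # past the raw retention window
    if age_days > INDEXED_RETENTION_DAYS:
        return "archive-only"   # only in the gzipped syslog files
    return "indexed"            # still searchable in ELK as well


today = date(2024, 6, 30)
for age in (10, 30, 91):
    print(age, retention_state(today - timedelta(days=age), today))
```

A nightly job could use a check like this to decide which indices to drop and which raw files to delete. If disk space allows, raising the indexed window is the only change needed.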
